What is mixed content?

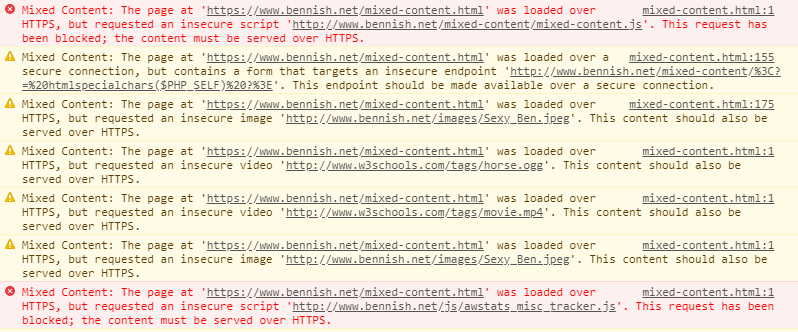

Mixed content is the term used to describe pages which are loaded over a secure HTTPS connection, but which request other assets – such as images and scripts – over insecure HTTP connections. Mixed content can be either active or passive, and different browser versions handle these security risks in different ways (modern browsers often block the requests completely). You can read more about mixed content here on Google Fundamentals, and experiment with a real-world example here – be sure to check the JavaScript console.
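To make the definition concrete, here is a minimal Python sketch that scans a page's HTML for resources hard-coded to `http://`. The tag list and the names `RESOURCE_ATTRS`, `InsecureResourceFinder` and `find_insecure_resources` are my own illustrative choices, and the check is deliberately simplistic: it ignores CSS `url()` references, resources requested by JavaScript, and `<link>` elements that do not load anything.

```python
from html.parser import HTMLParser

# Attributes that commonly trigger a subresource request.
# Illustrative subset only, not an exhaustive list.
RESOURCE_ATTRS = {
    "script": "src",
    "img": "src",
    "iframe": "src",
    "link": "href",
}


class InsecureResourceFinder(HTMLParser):
    """Collects (tag, url) pairs for resources referenced over plain HTTP."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        wanted = RESOURCE_ATTRS.get(tag)
        if wanted is None:
            return  # e.g. <a href> is navigation, not a subresource
        for name, value in attrs:
            if name == wanted and value and value.lower().startswith("http://"):
                self.insecure.append((tag, value))


def find_insecure_resources(html):
    """Return every (tag, url) pair in the markup that uses http://."""
    finder = InsecureResourceFinder()
    finder.feed(html)
    return finder.insecure
```

On a page served over HTTPS, anything this returns is a candidate for mixed content.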

It’s worth emphasising that identification of insecure resources is a worthwhile exercise whether or not you’ve already moved to HTTPS.

- If you’re trying to salvage a botched migration, securing these requests is essential if you’re to close off vulnerabilities and ensure your site behaves correctly.

- If you’re yet to migrate, securing resources is a great step towards future-proofing your site in readiness for an HTTPS migration. As we shall see, in many cases this can be done instantly and at zero expense.

Identifying mixed content manually can be time-consuming, but with the right tools you can make your life easier.

Tackling mixed content with Lighthouse

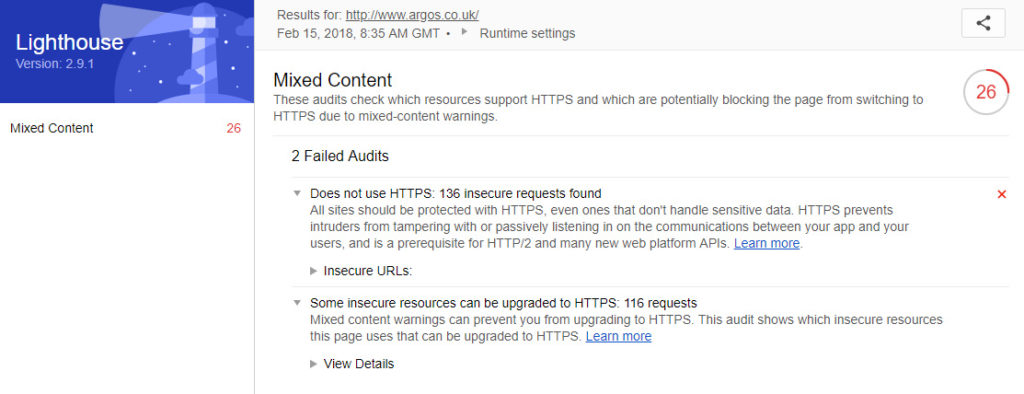

Google’s rapidly advancing Lighthouse tool has been equipping site owners with the tools they need to make protocol migrations as painless as possible. While often associated with performance testing for progressive web apps, Lighthouse has become a very good high-level benchmark for accessibility, security, usability, and modern best practices.

Released last week, version 2.8 introduced the Mixed Content audit. This new audit is not run by default in Lighthouse. You’ll need to run the command line version of the tool and install Chrome Canary.

If you’re new to the command line version, you’ll need to install Node.js. If you’re on Windows, you’ll want to enable the Windows Subsystem for Linux or get Git Bash (included with Git for Windows) to ensure a usable command line environment.

Install Lighthouse from the Node package manager (npm):

    npm install -g lighthouse

Next, head to your working directory and run Lighthouse with the --mixed-content flag to generate the report:

    lighthouse --mixed-content --view https://www.example.com/

The HTML report will open in your default browser. The results are helpfully divided into two categories:

The first is essentially Lighthouse’s standard HTTPS test, and it provides a list of all insecure resources (images, stylesheets, JavaScript, etc) which the page is calling. These can be exported as JSON for convenience.

The second category is the most useful. This shows insecure resources which are easily upgradeable to HTTPS (i.e. the domain in question already has a valid SSL certificate). In many cases these will be resources loaded from a third-party CDN which are hard-coded to use the HTTP protocol. For example:

    <script src="http://ajax.googleapis.com/ajax/libs/jquery/3.3.1/jquery.min.js"></script>

These URLs can simply be changed to specify the secure protocol. On secure pages this will prevent mixed content, but it’s worth making this change on insecure (HTTP) pages too: it protects those assets from tampering in transit and makes it easier to upgrade your site to HTTPS in the near future. It’s also worth mentioning that – contrary to popular opinion – requesting secure assets from non-secure pages does not have any meaningful negative performance implications. All assets which are available securely should always be requested via HTTPS.
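If you have many templates to fix, the change can be scripted. The sketch below is one possible approach, not a finished tool: `HTTPS_READY_HOSTS` is a hypothetical allow-list that you would fill with hosts you have confirmed serve the same assets over HTTPS, and the regular expression only looks at quoted `src` and `href` attributes.

```python
import re

# Hypothetical allow-list: hosts you have verified are available over HTTPS.
HTTPS_READY_HOSTS = {"ajax.googleapis.com"}

# Matches src="http://host or href='http://host and captures the host name.
URL_ATTR = re.compile(
    r'(?P<prefix>\b(?:src|href)=["\'])http://(?P<host>[^/"\']+)',
    re.IGNORECASE,
)


def upgrade_known_hosts(html, hosts=HTTPS_READY_HOSTS):
    """Rewrite http:// to https:// for allow-listed hosts only."""

    def swap(match):
        if match.group("host").lower() in hosts:
            return f'{match.group("prefix")}https://{match.group("host")}'
        return match.group(0)  # leave unknown hosts untouched

    return URL_ATTR.sub(swap, html)
```

Anything on a host outside the allow-list is left alone, so resources that are not yet available securely can be dealt with separately.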

For resources which are requested over HTTP which cannot simply be requested via HTTPS, the situation is a little more complex. Your options will vary depending on the specifics of your setup, but in many cases you may be able to either load the resource from a different host or CDN, or host the asset on your own (secured) servers.

Finally, you might also see resources on your own domain listed in the Lighthouse report. Let’s say you’ve decided on a phased approach to your HTTPS migration, and are allowing both HTTP and HTTPS versions to resolve while you iron out any issues. Relative and protocol-relative URLs inherit the protocol of the page that references them, so whenever the page itself is loaded over HTTP, these assets will be requested insecurely:

    <link rel="stylesheet" href="//mydomain.com/style.css">

While an eventual full migration to HTTPS (i.e. site-wide permanent redirects and the HSTS header enabled) will ensure these resources are requested securely, there’s nothing to stop you from upgrading these requests to HTTPS now, should you wish to do so.
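You can see how a protocol-relative reference inherits the page's scheme with Python's standard `urllib.parse.urljoin`, which follows the same resolution rules a browser applies. The `resolve` helper is just an illustrative wrapper of mine.

```python
from urllib.parse import urljoin


def resolve(page_url, reference):
    """Resolve a URL reference against the URL of the page containing it."""
    return urljoin(page_url, reference)


reference = "//mydomain.com/style.css"

# The same markup yields a different request depending on how the page loaded.
print(resolve("http://mydomain.com/", reference))   # -> http://mydomain.com/style.css
print(resolve("https://mydomain.com/", reference))  # -> https://mydomain.com/style.css
```

Hard-coding `https://` in the reference removes that dependency on the page's protocol.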

Tackling mixed content with HTTP headers

By setting a Content Security Policy (CSP), it is possible to manage mixed content at scale. If you’re unfamiliar with the principles behind CSP, the articles at HTML5Rocks and the MDN are good places to start.

You can set a CSP by including the Content-Security-Policy or Content-Security-Policy-Report-Only HTTP headers in your server responses. These headers allow us to communicate to compatible browsers how we want them to handle mixed content: we can choose to block, automatically upgrade, or simply report mixed content back to us.

When life throws a challenge your way, it’s often advisable to take stock of the situation before grabbing your hammer. Mixed content problems are no exception: far better to know how many resources you’re dealing with and where they’re being found than to blindly block everything like a maniac. The following response header will instruct compatible browsers to send a JSON-formatted violation report as a POST request to a suitable endpoint every time an asset is requested via HTTP:

    Content-Security-Policy-Report-Only: default-src https: 'unsafe-inline' 'unsafe-eval'; report-uri https://example.com/myReportingEndpoint

These reports are JSON-formatted, and you can instruct your developer to ensure the endpoint processes them into your preferred format.

    {
      "csp-report": {
        "document-uri": "https://mydomain.com/",
        "referrer": "",
        "blocked-uri": "http://ajax.googleapis.com/ajax/libs/jquery/3.3.1/jquery.min.js",
        "violated-directive": "default-src https: 'unsafe-inline' 'unsafe-eval'",
        "original-policy": "default-src https: 'unsafe-inline' 'unsafe-eval'; report-uri https://example.com/myReportingEndpoint",
        "disposition": "report"
      }
    }
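As a rough idea of what "processing" could look like, the Python sketch below tallies reports by the host of the blocked resource, which is usually the first thing you want to know. It assumes your endpoint has already stored each POST body as a JSON string; the function name `blocked_hosts` is hypothetical, and a real endpoint would also need validation and rate limiting.

```python
import json
from collections import Counter
from urllib.parse import urlsplit


def blocked_hosts(raw_reports):
    """Count violation reports per blocked host.

    raw_reports is an iterable of JSON strings, one per report body.
    """
    counts = Counter()
    for raw in raw_reports:
        report = json.loads(raw).get("csp-report", {})
        blocked = report.get("blocked-uri", "")
        # blocked-uri is not always a full URL (it can be a keyword such as
        # "inline"), so fall back to the raw value when there is no host.
        host = urlsplit(blocked).netloc or blocked or "(unknown)"
        counts[host] += 1
    return counts
```

Calling `blocked_hosts(bodies).most_common()` then gives you the worst offenders first.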

Once you’ve tackled the issues and are ready to start enforcing your CSP, you can opt to automatically upgrade all insecure requests to HTTPS:

    Content-Security-Policy: upgrade-insecure-requests

This is a relatively new standard (remember that CSPs are only respected by browsers that support them) but support is climbing rapidly. This header will force browsers to upgrade requests automatically, and if a particular resource is not available via HTTPS, it will not be loaded (thereby preserving security).

Finally, you may opt to block mixed content completely:

    Content-Security-Policy: block-all-mixed-content

This is fairly self-explanatory, but it’s worth noting that this directive will cascade into <iframe> elements too.
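How you attach these headers depends on your server or framework. Purely as an illustration, and assuming a Python application served over WSGI, a small wrapper like the one below could add the header to every response. The name `add_csp_header` and its parameters are hypothetical; most web servers and CDNs offer an equivalent configuration setting that may suit you better.

```python
def add_csp_header(app, policy="upgrade-insecure-requests", report_only=False):
    """Wrap a WSGI app so every response carries a CSP header.

    With report_only=True the policy is sent as
    Content-Security-Policy-Report-Only, so nothing is enforced.
    """
    header_name = (
        "Content-Security-Policy-Report-Only"
        if report_only
        else "Content-Security-Policy"
    )

    def wrapped(environ, start_response):
        def start_with_csp(status, headers, exc_info=None):
            # Copy the app's headers and append ours before passing them on.
            new_headers = list(headers) + [(header_name, policy)]
            return start_response(status, new_headers, exc_info)

        return app(environ, start_with_csp)

    return wrapped
```

Starting with `report_only=True` and a `report-uri` in the policy lets you observe violations first, then switch to enforcement once the reports dry up.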

In summary…

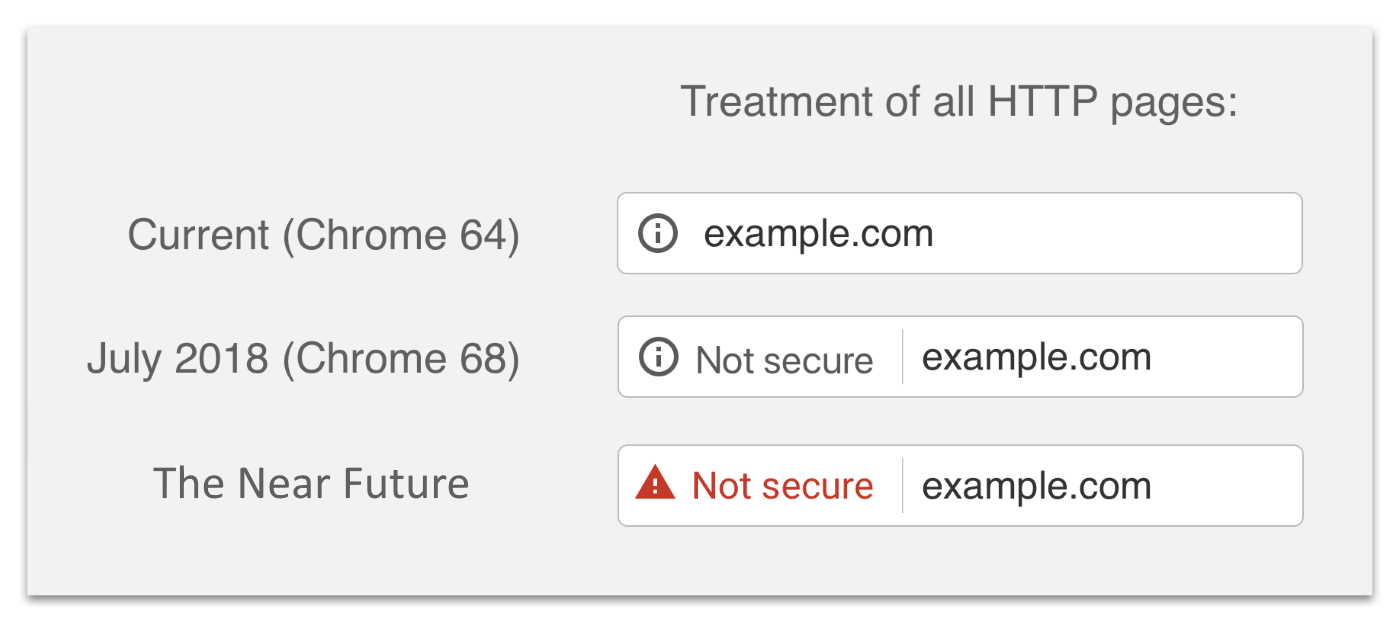

The benefits of HTTPS – and indeed the dangers of remaining on HTTP – are growing every day, but that’s not to say that a migration to HTTPS should be rushed. On the contrary, it is more important than ever that protocol migrations be executed carefully and with consideration given to SEO.

For site owners and developers who are yet to make that jump (or for anyone who’s made the jump but broken their legs on landing thanks to a carelessly placed insecure resource), the tools and techniques I’ve outlined above should help you to take positive steps towards securing your site in a smooth migration to HTTPS.

Thanks for reading!

Bertrand

Great article, thanks a lot. Do you know if the programmatic NodeJS version supports Mixed-Content scanning as well?

I’m talking about: https://github.com/GoogleChrome/lighthouse/blob/master/docs/readme.md#using-programmatically

I see that it takes options, but I haven’t found any reliable source as to what exactly goes in there if I want to scan a given webpage for mixed content.

Tom Bennet

Hey Bertrand. I haven’t done so myself, but I believe it’s possible by passing the appropriate `--config-path`. You might want to check out this merged PR on GitHub which introduced the key functionality: https://github.com/GoogleChrome/lighthouse/pull/3953

Thanks!

Tom
