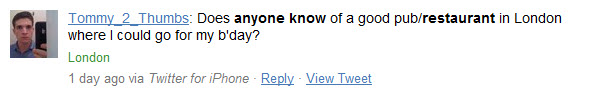

Does anyone know?

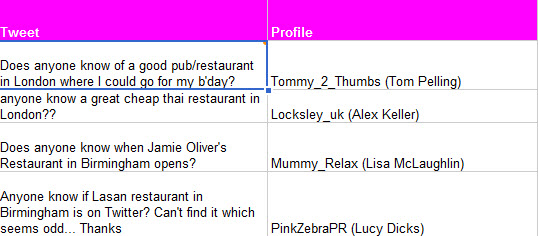

“Does anyone know” is such an interesting search on Twitter – just combine that query with a keyword, like “restaurant” and a location for everyone on Twitter looking for a very specific, thing. Great if you happen to be trading in that thing.

Try a query like this to pull through results from the Twitter search RSS feed:

=Importfeed(“https://search.twitter.com/search.atom?q=+restaurant+%22anyone+know%22+london+OR+manchester+OR+birmingham”)

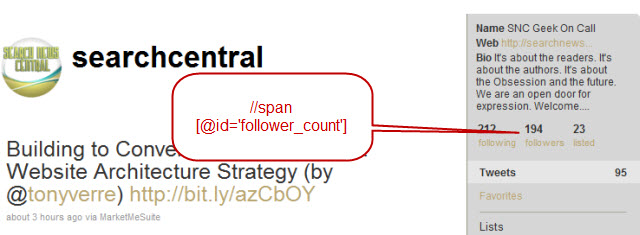

Twitter followers

A nod to Steven Foskett for this one, and particular kudos for the mention of vCard, the query for LinkedIn connections and Alexa Rank. Nice!

Try this query:

=importXML(“https://twitter.com/[your-username]”,”//span[@id=’follower_count’]”)

Which will give you the number of followers you have on your Twitter profile. I added together the total followers that my SEO team have for kicks. I wonder how long it will be before someone totals up all followers counts for all UK agencies? I wonder if there’s a correlation between that data and turnover :-)

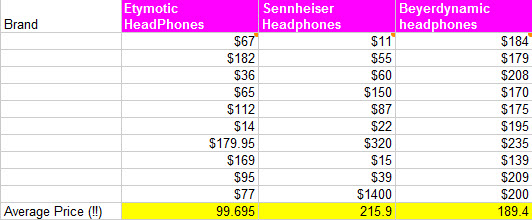

Pull price data from the web

I think that, after some mild haranguing, Will might have purchased himself a pair of Etymotic headphones. Perhaps my pitch would have gone slightly more efficiently with a little xPath and Google Product search:

For something like this, a way smarter approach to get pricing data from Amazon would be to use their API – but you get the point with this brief example.

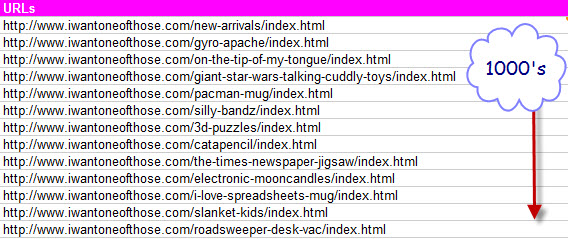

Get all of your (competitor’s) URLs from their sitemap

Try something like this:

=ImportXML(“https://www.yourcompetitordomain.com/sitemap.xml”,”//url/loc”)

I mentioned doing this with Excel to find orphaned pages, but you can have a lot more fun with importXML. For one, theoretically you could go off and fetch all keywords contained in the <title> tag of each of the URLs – an instant keyword strategy!

Have fun

This wasn’t a particularly “advanced” post – I did quite enjoy the thought of what to do next with this data, though. Fetch IP addresses, WHOIS details, root domain links or keyword research data with Google Suggest, the Alchemy API, or plain scraping your competitor home pages. If you’re using importXML, I’d really like to hear how.

Anyway, as I mentioned earlier, please feel free to take the queries from here: https://bit.ly/9Fs7aF target=”_blank” – improve them, and let me know what you did.

A little update

I got in touch with my friend Tom to see if he wanted to contribute. He’s been out in Vegas, but came back with a tip to solve the problem of Google caching a result for around two hours at a time:

“Google sheets will cache a URL for ~2 hours and so if you want to crawl a URL more often than that then you need to add a modifier to the URL.

I use int(now()*1000) to generate a unique timestamp and then add that into the URL in a dummy query string. E.g.

https://www.google.com/search?q=seattle+seo+consulting&pws=0&gl=us&time=1354333

The search results won’t change when you change the time value but Google docs will treat it as a fresh URL and crawl it again.

Also – you can do lots of amazingly fancy things using Google Scripts (kind of like macros for google docs) but don’t have a huge amount of time to go into detail about that now!”

Well, hopefully, Tom will have time soon – thanks for contributing!