Click depth vs internal links

When assessing a website architecture we often refer to ‘level’ (or click depth), which denotes how many clicks away a given URL is from the start page. As a metric this is useful for understanding the broad positioning of pages on your website, and roughly how much value could be passed from the home page with each hop.

However, for much larger and more complex site architectures this metric gives us only a limited view, as it doesn’t reflect how accessible a page is across the site as a whole. That wider accessibility is better measured by the number of internal links pointing to a given page, and it should come as no surprise that pages with fewer internal links struggle to be crawled, indexed and ranked organically.
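To make the distinction concrete, here is a minimal sketch on a made-up five-page site. The link graph, the URLs and the function names are all hypothetical, and inlinks are counted as unique linking pages, which is a simplification of what a crawler reports.

```python
from collections import deque

# Hypothetical toy site: each key links to the pages in its list.
links = {
    "/": ["/category", "/about"],
    "/category": ["/product-a", "/product-b", "/"],
    "/about": ["/"],
    "/product-a": ["/product-b", "/category"],
    "/product-b": ["/category"],
}

def click_depth(links, start="/"):
    """Breadth-first search: fewest clicks from the start page to each URL."""
    depth = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth

def inlink_counts(links):
    """Count how many distinct pages link to each URL (self-links ignored)."""
    counts = {page: 0 for page in links}
    for source, targets in links.items():
        for target in set(targets):
            if target != source:
                counts[target] = counts.get(target, 0) + 1
    return counts

depths = click_depth(links)
inlinks = inlink_counts(links)
for page in sorted(links):
    print(page, "depth:", depths.get(page), "inlinks:", inlinks[page])
```

In this toy graph both product pages sit at the same click depth, yet one has twice as many pages linking to it as the other, which is exactly the difference click depth alone hides.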

Putting it into practice

All you’ll need is:

- Screaming Frog – running a standard crawl of your chosen domain

- Google Analytics – to retrieve organic landing page data

You can retrieve both from the latest version of Screaming Frog (which is an amazing feature, I might add). However, in some scenarios I like to have a little more control over which API calls are being made, so as not to make unnecessary requests, particularly on a wider standard site crawl.

So in this post, we’ll be referring to the Google Analytics add-on available in Google Sheets, which allows you to query pretty much anything, though it extracts a maximum of 10K rows of data in a single hit.

Log into your Google Account, head to ‘Add-ons’ > ‘Get add-ons’ in the toolbar of Sheets, and search for ‘Google Analytics’. After clicking add and accepting the permissions, return to the

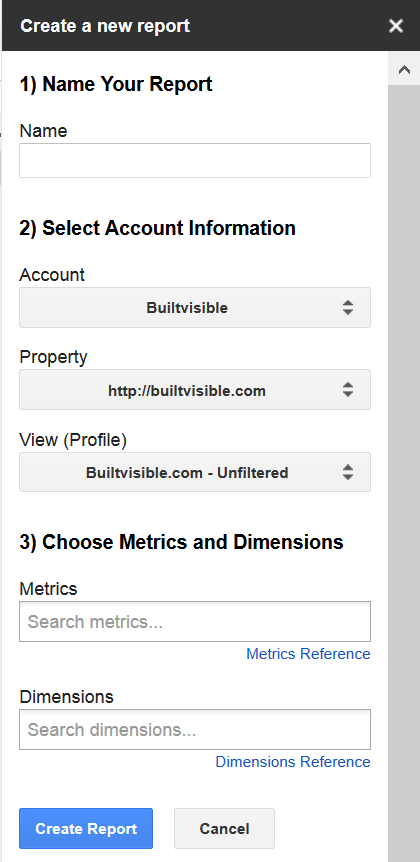

‘Add-ons’ menu and you’ll see a new option for ‘Google Analytics’, from there select ‘create a report’ and the below window is displayed:

Name the report and select a GA account & profile from the pre-populated list associated with the logged in Google account.

In the following fields you can call anything from the GA API, so you may wish to experiment at some point – for reference see the API explorer. For the purpose of this post we’re going to add:

- ‘Metrics’ = Sessions

- ‘Dimensions’ = Landing Page

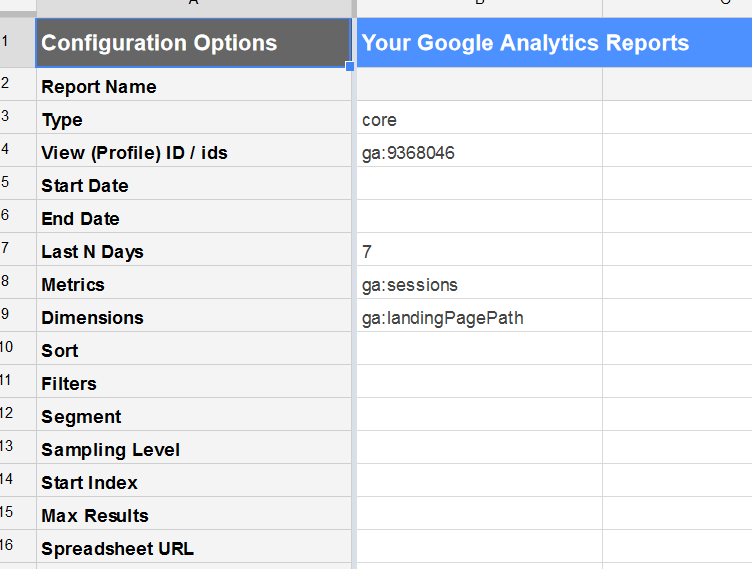

After creating the report you’ll see the following config sheet:

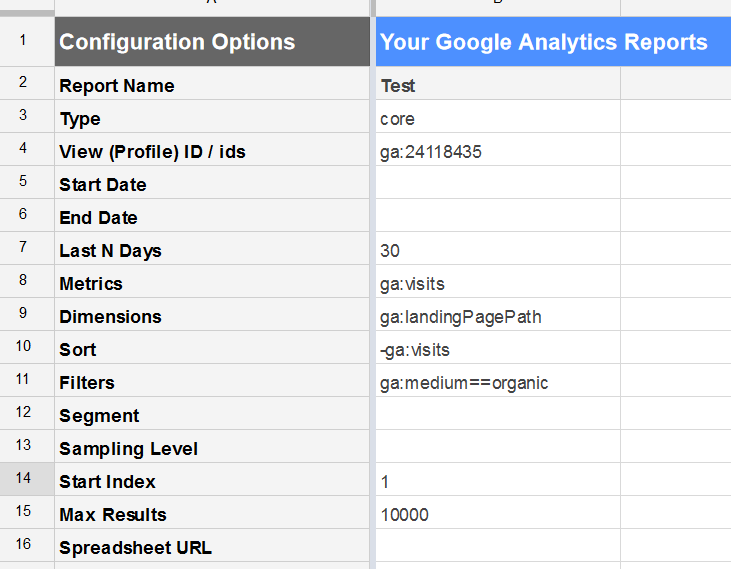

We’re then going to make the following changes:

You’ll notice I’ve set this to retrieve data for the last 30 days (this can vary according to the size of the GA profile), added a sort of -ga:visits (the leading hyphen sorts visits in descending order; ga:visits is the older name for ga:sessions), and applied a filter of ga:medium==organic to retrieve organic data only.

You can retrieve a maximum of 10,000 rows of data in one API call, so we’ll need to combine this with the use of ‘Start Index’ to cycle through the data 10K rows at a time.
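As a rough sketch of the arithmetic, the snippet below lists the ‘Start Index’ value you would enter for each run. It assumes the start index is 1-based, as it is in the Core Reporting API, and the function name, the dictionary keys and the 25,000-row total are purely illustrative.

```python
def start_indexes(total_rows, page_size=10_000):
    """Return the 1-based start index for each run needed to cover total_rows."""
    return list(range(1, total_rows + 1, page_size))

# Assumed example: a profile with 25,000 organic landing pages.
for start in start_indexes(25_000):
    # The keys here are illustrative labels, not the add-on's exact field names.
    print({"start_index": start, "max_results": 10_000})
```

Each run after the first simply picks up one row past where the previous one stopped.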

Head back to the GA menu, this time selecting ‘run report’.

Once you have the data, copy and paste it into Excel and convert it into a table.

With your standard Screaming Frog crawl completed, export to Excel, convert to a table and add a column to execute a VLOOKUP to pull your organic traffic data across.

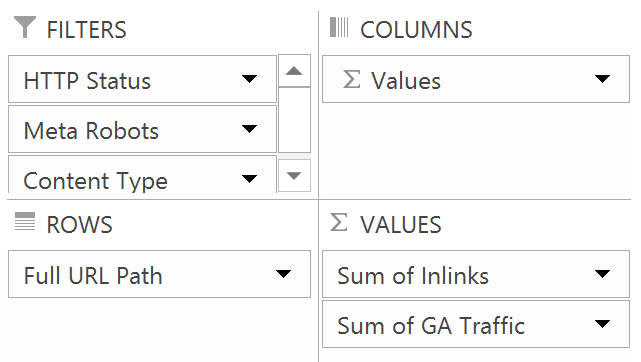
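If you’d rather script the lookup, the sketch below does the same join in plain Python. It assumes the crawl export holds full URLs while the GA export holds landing page paths, so the URL is reduced to its path before matching. The sample rows, the column names and the path_key helper are hypothetical stand-ins for your own exports.

```python
from urllib.parse import urlsplit

# Hypothetical rows standing in for the two exports.
crawl_rows = [
    {"Address": "https://www.example.com/", "Inlinks": 120},
    {"Address": "https://www.example.com/category/shoes", "Inlinks": 45},
    {"Address": "https://www.example.com/old-page", "Inlinks": 1},
]
ga_rows = [
    {"Landing Page": "/", "Sessions": 900},
    {"Landing Page": "/category/shoes", "Sessions": 310},
]

def path_key(url):
    """Reduce a full URL to its path (plus query string, if any)."""
    parts = urlsplit(url)
    path = parts.path or "/"
    return f"{path}?{parts.query}" if parts.query else path

sessions_by_path = {row["Landing Page"]: row["Sessions"] for row in ga_rows}

# Equivalent of the VLOOKUP column; URLs with no GA row get 0 rather than #N/A.
for row in crawl_rows:
    row["Organic Sessions"] = sessions_by_path.get(path_key(row["Address"]), 0)

for row in crawl_rows:
    print(row["Address"], row["Inlinks"], row["Organic Sessions"])
```

Defaulting unmatched URLs to 0 is a deliberate choice here, since pages with no organic sessions are exactly the ones the later sorts rely on.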

Convert into a pivot table with the following properties:

Add the following filters:

- HTTP Status = 200 OK

- Meta Robots = blank or index, follow

- Content Type = text/html
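The three filters above can be sketched as a single check. The column names and sample rows below are assumptions for illustration and may not match the headers in your export.

```python
# Hypothetical crawl rows; the column names are assumed, not taken from a real export.
pages = [
    {"Address": "/a", "Status Code": 200, "Meta Robots": "", "Content": "text/html; charset=UTF-8"},
    {"Address": "/b", "Status Code": 200, "Meta Robots": "noindex, follow", "Content": "text/html"},
    {"Address": "/c", "Status Code": 301, "Meta Robots": "", "Content": "text/html"},
    {"Address": "/d.pdf", "Status Code": 200, "Meta Robots": "", "Content": "application/pdf"},
    {"Address": "/e", "Status Code": 200, "Meta Robots": "INDEX, FOLLOW", "Content": "text/html"},
]

def is_indexable(row):
    """Apply the three filters: 200 status, blank or index/follow robots, HTML content."""
    robots = row["Meta Robots"].replace(" ", "").lower()
    return (
        row["Status Code"] == 200
        and robots in ("", "index,follow")
        and row["Content"].lower().startswith("text/html")
    )

indexable = [row["Address"] for row in pages if is_indexable(row)]
print(indexable)
```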

This allows us to view and compare all indexable pages on the domain against the number of internal links to each URL and its organic traffic.

You can then use this pivot table for a few approaches:

1. Sort ‘inlinks’ low to high vs 0 organic traffic

This can quickly identify redundant or legacy page types that may have been forgotten, resulting in indexation bloat, or that may even be unintentionally hoarding external link equity on the domain.

Pages that feature here often carry more of the traits associated with Google’s Panda algorithm (thin or low-quality content), and so reviewing patterns in the URLs can help to resolve and prioritise wider conflicts, e.g. categories with 0 products, indexed search results etc.
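One way to review patterns in the URLs is to group the zero-traffic pages by their first path segment. The sketch below uses invented rows and a hypothetical first_segment helper. On a real site you may want to group on something more specific than the first folder.

```python
from collections import Counter
from urllib.parse import urlsplit

# Hypothetical indexable pages with inlink counts and organic sessions.
rows = [
    {"Address": "https://www.example.com/search?q=red", "Inlinks": 1, "Organic Sessions": 0},
    {"Address": "https://www.example.com/search?q=blue", "Inlinks": 2, "Organic Sessions": 0},
    {"Address": "https://www.example.com/category/empty", "Inlinks": 3, "Organic Sessions": 0},
    {"Address": "https://www.example.com/category/shoes", "Inlinks": 45, "Organic Sessions": 310},
    {"Address": "https://www.example.com/legacy/old-promo", "Inlinks": 1, "Organic Sessions": 0},
]

def first_segment(url):
    """Return the first folder of the URL path, e.g. '/search'."""
    segments = [s for s in urlsplit(url).path.split("/") if s]
    return "/" + segments[0] if segments else "/"

# Zero-traffic pages, sorted by inlinks low to high.
zero_traffic = sorted(
    (r for r in rows if r["Organic Sessions"] == 0),
    key=lambda r: r["Inlinks"],
)

# Count how often each section of the site appears among them.
patterns = Counter(first_segment(r["Address"]) for r in zero_traffic)
print(patterns.most_common())
```

A section that dominates this count, such as internal search results in the toy data, is a candidate for a template-level fix rather than page-by-page clean-up.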

2. Sort ‘inlinks’ low to high excluding pages with 0 organic traffic

This will give you a standard high-level snapshot as to how visible a particular URL is on the domain based on the number of internal links.

If a page has few internal links but is still capable of driving organic traffic, we know that enough authority is being passed to the URL for it to rank in a semi-meaningful position in Google. This can also be an indicator of lower competition levels for related organic searches, and so a real quick-win opportunity exists just by increasing the on-site presence of these URLs to funnel a little more link equity through.

3. Sort ‘organic traffic’ high to low

This gives a view of the top performing URLs, which can also reveal some interesting opportunities. Broadly speaking these pages will tend to have a similarly high level of internal links, but you’ll usually find a few in the top 10 that have disproportionately fewer. There are a number of factors, both internal and external, that may be influencing this (seasonality, links, industry/sector, type of site, level of content etc), but forgetting that for a second, from a solely ‘on-site’ view these pages are, on the surface at least, working a lot harder to achieve the same results. Increasing their on-site visibility to a similar level as the other top performers, or even slightly, is likely to have a positive impact.

I can think of a few other ways this approach could be extended for more quick wins, e.g. pulling external link metrics and assessing both inlinks and outlinks to ensure equity isn’t being ‘hoarded’ or locked away in a few areas of the site.
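A simple way to surface those outliers is to compare each top page’s inlinks with the median for the group. In the sketch below the rows are invented, and the function name, the top_n cut-off and the 0.5 ratio are arbitrary assumptions to tune for your own site.

```python
from statistics import median

# Hypothetical indexable pages with inlink counts and organic sessions.
rows = [
    {"Address": "/", "Inlinks": 120, "Organic Sessions": 900},
    {"Address": "/category/shoes", "Inlinks": 110, "Organic Sessions": 700},
    {"Address": "/guides/sizing", "Inlinks": 15, "Organic Sessions": 650},
    {"Address": "/category/boots", "Inlinks": 100, "Organic Sessions": 500},
    {"Address": "/category/sale", "Inlinks": 95, "Organic Sessions": 400},
    {"Address": "/contact", "Inlinks": 2, "Organic Sessions": 10},
]

def underlinked_top_pages(rows, top_n=10, ratio=0.5):
    """Return top-traffic URLs whose inlinks fall below ratio x the group's median."""
    top = sorted(rows, key=lambda r: r["Organic Sessions"], reverse=True)[:top_n]
    typical = median(r["Inlinks"] for r in top)
    return [r["Address"] for r in top if r["Inlinks"] < typical * ratio]

flagged = underlinked_top_pages(rows, top_n=5)
print(flagged)
```

The median is used rather than the mean so that a single heavily linked page such as the home page does not skew what counts as typical.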

As SEOs and marketers, we can be put under a lot of pressure to achieve results, but it can be the seemingly lower effort and more subtle changes that have the biggest impact.